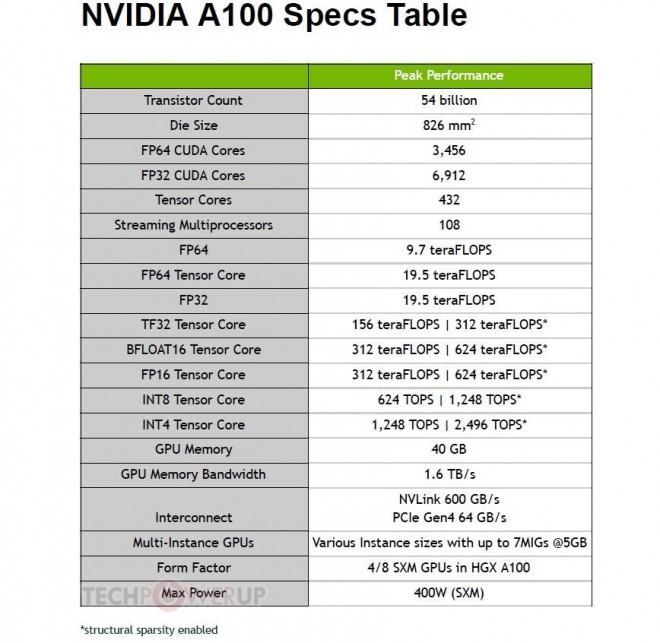

NVIDIA A100 Tensor Core GPUs and the HGX A100 4-GPU baseboard.

The NVIDIA A100 GPU is engineered to provide as much AI and HPC computing power as possible with the new NVIDIA Ampere architecture and its optimizations. Built on TSMC 7nm N7 FinFET, A100 has improved transistor density, performance, and power efficiency compared to prior 12nm technology.

NVIDIA A100 delivers 312 teraFLOPS (TFLOPS) of deep learning performance. That's 20X the Tensor floating-point operations per second (FLOPS) for deep learning training and 20X the Tensor tera operations per second (TOPS) for deep learning inference compared to NVIDIA Volta GPUs. NVIDIA confirms its leadership in the MLPerf benchmark, with several industry performance records for AI training.

NVIDIA NVLink in A100 delivers 2X higher throughput compared to the previous generation. When combined with NVIDIA NVSwitch™, up to 16 A100 GPUs can be interconnected at up to 600 gigabytes per second (GB/sec), unleashing the highest application performance possible on a single server. NVLink is available in A100 SXM GPUs via HGX A100 server boards and in PCIe GPUs via an NVLink Bridge for up to 2 GPUs.

With the new Multi-Instance GPU (MIG) capabilities in Ampere GPUs, A100 can create the best virtualized GPU environments possible for Cloud Service Providers. An A100 GPU can be partitioned into as many as seven GPU instances, fully isolated at the hardware level with their own high-bandwidth memory, cache, and compute cores. MIG gives developers access to breakthrough acceleration for all their applications, and IT administrators can offer right-sized GPU acceleration for every job, optimizing utilization and expanding access to every user and application.

Whether using MIG to partition an A100 GPU into smaller instances or NVLink to connect multiple GPUs to speed large-scale workloads, A100 can readily handle different-sized acceleration needs, from the smallest job to the biggest multi-node workload. A100's versatility means IT managers can maximize the utility of every GPU in their data center, around the clock.

Three Ampere GPU models are good upgrades: the A100 SXM4 for multi-node distributed training, the A6000 for single-node, multi-GPU training, and the 3090 as the most cost-effective choice, as long as your training jobs fit within its memory. Lambda's GPU benchmarks for deep learning are run on over a dozen different …

With up to 80 gigabytes of HBM2e, A100 delivers the world's fastest GPU memory bandwidth of over 2TB/s, as well as a dynamic random-access memory (DRAM) utilization efficiency of 95%. A100 delivers 1.7X higher memory bandwidth over the previous generation.

AI networks have millions to billions of parameters. Not all of these parameters are needed for accurate predictions, and some can be converted to zeros, making the models “sparse” without compromising accuracy. Tensor Cores in A100 can provide up to 2X higher performance for sparse models. While the sparsity feature more readily benefits AI inference, it can also improve the performance of model training.
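To make the sparsity idea concrete, here is a minimal sketch of magnitude pruning in plain Python. It assumes the 2:4 fine-grained structured pattern (two non-zero values in every group of four), which the text above does not spell out. The function names `prune_2_of_4` and `sparsity` and the sample weights are hypothetical and chosen only for illustration. This is not NVIDIA's tooling, and it shows only the zeroing step, not the retraining that usually follows to recover accuracy.

```python
def prune_2_of_4(weights):
    """Zero the two smallest-magnitude values in each group of four.

    Illustrative only: assumes a flat list whose length is a multiple of 4.
    """
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest-magnitude values in this group.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        # Keep those two values in place and replace the others with zero.
        pruned.extend(w if i in keep else 0.0 for i, w in enumerate(group))
    return pruned


def sparsity(weights):
    """Fraction of entries that are exactly zero."""
    return sum(1 for w in weights if w == 0) / len(weights)


# Hypothetical weights, two groups of four.
weights = [0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.4, 0.01]
pruned = prune_2_of_4(weights)
print(pruned)
print(sparsity(pruned))
```

Half of the entries end up as zeros in a regular pattern. That regularity is what lets hardware skip the zeroed values instead of multiplying by them.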
